Engram Memory Network: Brain-Inspired Prototype Explanations

Abstract

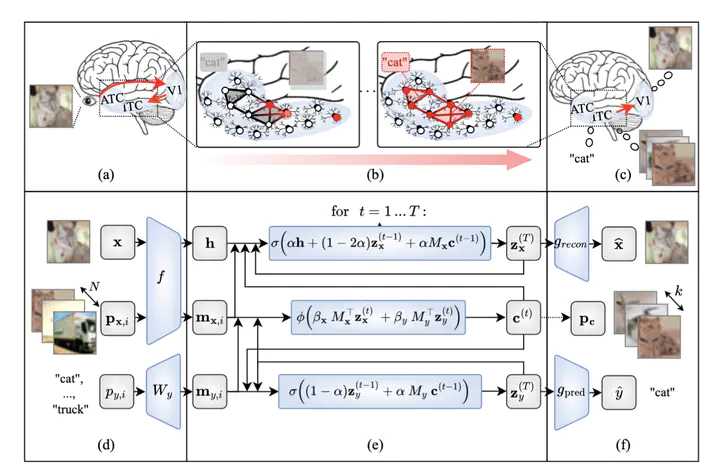

Artificial intelligence systems are increasingly deployed in high-stakes decision-making scenarios. In response, prototype-based models in explainable AI have attracted growing attention for their ability to retrieve visually similar examples as faithful explanations. Although these models achieve competitive classification accuracy, their explanation quality often degrades across datasets with varying characteristics, which limits their practical applicability. To address this limitation, we draw on insights from cognitive neuroscience, particularly the human memory system, which encodes input-relevant information into representations that support recognition. In this paper, we propose the Engram Memory Network (EMN), a model that emulates the ventral visual stream and inferior temporal cortex of the human brain. Central to this model is a Hopfield-inspired memory module that iteratively retrieves representative prototypes. The resulting memory-refined latents are used for both reconstruction and classification, encouraging the selected prototypes to capture input-relevant information across diverse datasets. To address the lack of standardized evaluation for prototype explanations, we assess three complementary aspects: perceptual similarity (LPIPS and DISTS), which measures perceived resemblance; subclass alignment, which measures whether prototypes match the input’s fine-grained category beyond the supervised labels; and information preservation (NMI), which measures how much input information the selected prototypes capture. Experiments show that EMN improves visual similarity by 14.84%, subclass alignment by 104.57%, and NMI by 63.86% on average across CIFAR-10/100, CIFAR-100S, MNIST, HAM10000, and CUB-200, at the cost of a modest 0.57 percentage-point drop in classification accuracy relative to the best baseline. These findings underscore the effectiveness of neuroscience-inspired explanation mechanisms in advancing the interpretability and reliability of prototype-based models.
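To make the idea of iterative prototype retrieval concrete, the following is a minimal sketch of a generic softmax-based (modern Hopfield-style) update, not the EMN memory module itself. The function names, the inverse temperature `beta`, the number of `steps`, and the toy two-dimensional prototypes are all assumptions chosen for illustration. Each step scores the current latent against every stored prototype, converts the scores to attention weights, and replaces the latent with the weighted average of the prototypes.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def hopfield_retrieve(query, prototypes, beta=4.0, steps=3):
    """Iteratively pull a query latent toward stored prototypes.

    Hypothetical helper: `beta` and `steps` are illustrative defaults,
    not values taken from the paper.
    Returns the refined latent and the weights used in the final step.
    """
    state = list(query)
    weights = []
    for _ in range(steps):
        # Similarity of the current state to each prototype (dot product).
        scores = [beta * sum(p_d * s_d for p_d, s_d in zip(p, state))
                  for p in prototypes]
        weights = softmax(scores)
        # New state: attention-weighted average of the prototypes.
        state = [sum(w * p[d] for w, p in zip(weights, prototypes))
                 for d in range(len(state))]
    return state, weights


# Toy memory of three 2-D prototypes (made-up values).
prototypes = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
refined, weights = hopfield_retrieve([0.9, 0.2], prototypes)

# Index of the prototype that dominates the retrieval.
best = max(range(len(weights)), key=weights.__getitem__)
```

In this toy setting the prototype with the largest weight can be read as the retrieved explanation for the input, while the refined latent is what a downstream classifier or decoder would consume.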