Stable and Scalable Deep Predictive Coding Networks with Meta Prediction Errors

Abstract

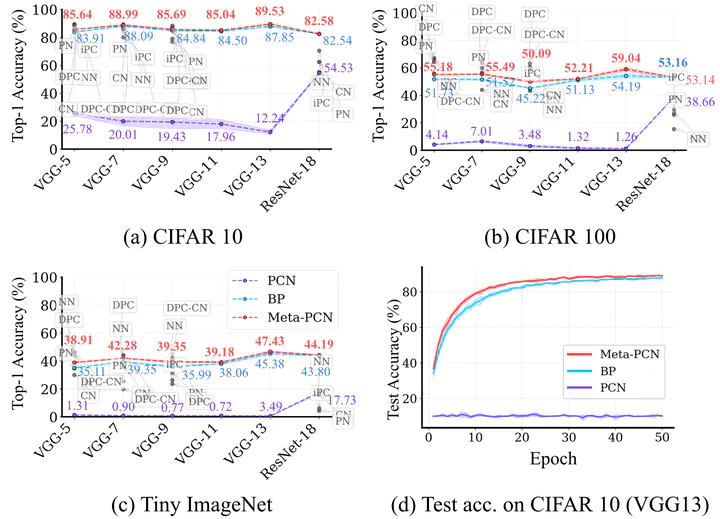

Predictive Coding Networks (PCNs) offer a biologically inspired alternative to conventional deep neural networks. However, their scalability is hindered by severe training instabilities that intensify with network depth. Through dynamical mean-field analyses, we identify two fundamental pathologies that impede deep PCN training: (1) prediction error (PE) imbalance that leads to uneven learning across layers, characterized by error concentration at network boundaries; and (2) exploding and vanishing prediction errors (EVPE) sensitive to weight variance. To address these challenges, we propose Meta-PCN, a unified framework that incorporates two synergistic components: (1) a loss based on meta-prediction error, which minimizes PEs of PEs to linearize the nonlinear inference dynamics; and (2) weight regularization that employs normalization to regulate weight variance and mitigate EVPE. Extensive experimental validation on CIFAR-10/100 and TinyImageNet demonstrates that Meta-PCN achieves statistically significant improvements over conventional PCNs, outperforming backpropagation in most tested configurations, while preserving the local learning rules of PCNs.